Posted by Admin

February 23, 2026

Best Practices for Ethical AI Voice Cloning and Consent

A voice is a kind of shortcut to credibility. A single phone call can reassure a parent, close a deal, or draw an audience into a story before the first sentence is finished. That same shortcut is also a weakness. In January 2024, robocalls to New Hampshire voters used an AI-generated clone of a well-known political leader's voice to discourage them from voting. Authorities investigated, and federal regulators later announced enforcement actions over the spoofed AI robocalls.

The same year, a security analysis reported a dramatic increase in deepfake fraud in customer calls, showing how quickly cloned voices moved from cool demo to abuse. Creators and brands sit in the middle of this shift. Using voice cloning AI is not a casual choice, and consent is not a checkbox. Money, ads, sponsorships, and distribution all increase the legal risk. This post is strictly educational and should not be regarded as legal advice. Let’s go deeper!

Understanding Voice Cloning and Legal Rights

Voice cloning tools learn patterns from audio recordings and generate speech that sounds like the original speaker. The process may seem simple, but compliance gets messy when people confuse three distinct things:

- A Person’s Voice As Identity: A voice works like a fingerprint. Even when it speaks new words, listeners connect it with a specific person. Voice likeness rights and publicity-style rights come into play, particularly in marketing or monetization.

- A Sound Recording As A Protected Asset: A recording is a fixed track, podcast, voicemail, narration, or song take. AI voice copyright issues can arise from rights in the recording, from licensing terms, and from how the recording was acquired or reused.

- An AI-Generated Voice Output As A New Recording: The output is a new recording, but it causes trouble if it re-creates someone's identity to promote something, implies an endorsement, breaches a contract, or comes from a model trained without authorization.

A practical way to think about compliance is a “rights stack”:

- Contract Rights: What you agreed to with a voice artist, client, platform, or distributor

- Recording Rights: Who owns the original audio, and who may use it again

- Publicity and Privacy Concepts: Rules on using someone's identity in advertising or trade

- Consumer Protection Rules: Standards for truthfulness, endorsements, disclosure, and deception

The core risk is not that AI exists. Real exposure begins when a synthetic voice is heard selling, persuading, or impersonating.

Copyright vs. Publicity Rights

A common trap many creators fall into is this: “I can clone a voice because a voice is not copyrightable.” Reality is more layered.

- Voice Alone Is Generally Not A Copyrighted Work: Copyright protects original authorship and, in audio, attaches to the recording and the underlying composition rather than to the fact that someone sounds like themselves. That is why AI voice copyright issues frequently depend on the training recordings, scripts, music beds, or other protected content in the dataset.

- Sound Recordings Are Protected: If the audio used in training came from a track you do not control, claims can target the rights in the recording, the licenses, and how the audio was copied or used.

- AI Outputs Can Still Trigger Identity-Based Claims In Commercial Contexts: New York's Civil Rights Law addresses using a living person's name, portrait, picture, likeness, or voice in advertising or trade without written permission, and provides penalties and civil remedies in separate sections.

Examples:

- New York: N.Y. Civ. Rights Law §50 (criminal) and §51 (civil injunction/damages) concern the use of the name/portrait/picture/likeness/voice of a living person in advertising or trade without written permission.

- California: Cal. Civ. Code §3344 (living persons: name, voice, signature, photograph, or likeness used in advertising or merchandising without permission) and §3344.1 (post-mortem rights of deceased personalities, which matter for estate licensing of digital replicas).

- FTC: Endorsement disclosure is addressed in 16 CFR Part 255, the Endorsement Guides, which interpret the deception provisions of Section 5 of the FTC Act: material connections should be disclosed, and misleading implied endorsements must be avoided.

These differences matter: consent rules, post-mortem treatment, and time limits vary by jurisdiction. That is why there is rarely a single answer to a right-of-publicity question about an AI voice, and why a consent mechanism needs a clear scope, a defined duration, and a way to revoke it.

Recommended Read: Technical Challenges and Ethical Issues in AI Music Generation

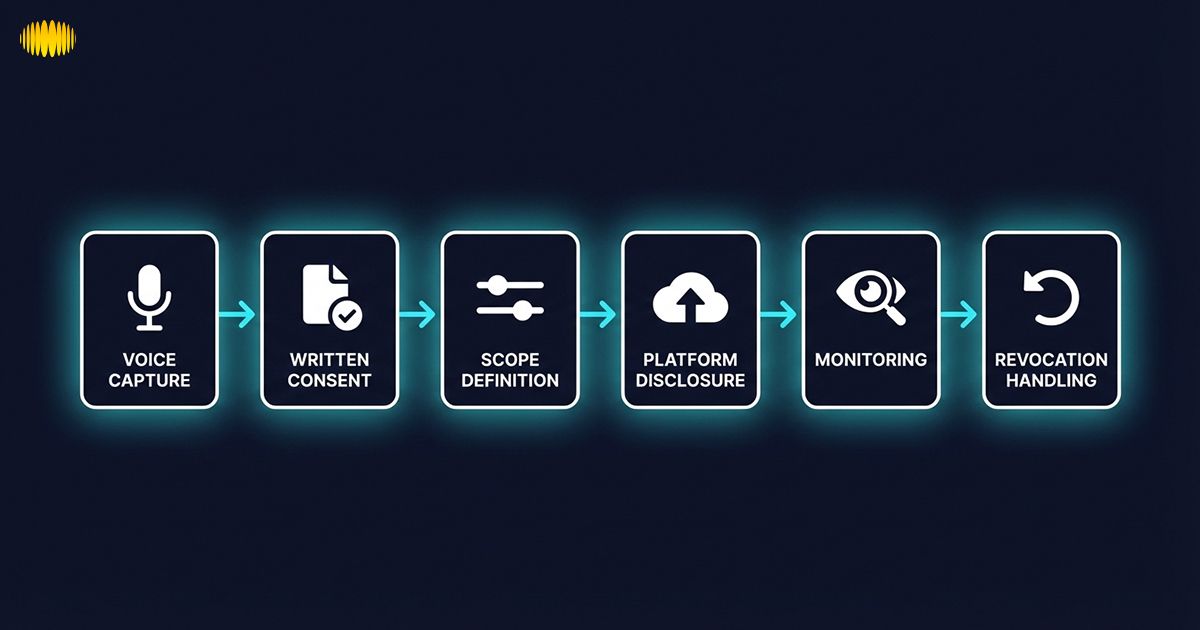

Consent Fundamentals for AI Voice Cloning

Ethical voice work begins with a basic principle: people control their own identity. The difficulty is turning that principle into documentation that holds up in practice.

Valid AI voice cloning consent typically has five characteristics:

- Informed Consent: The person understands what cloning involves, how realistic the outputs can be, and how models can be shared and reach further than the original project.

- Explicit Permission: Permission that is only implied, or given casually in a chat, causes friction later. Written terms protect both parties, particularly during brand or platform review.

- Specific Scope: Loose scope leads to conflict when the voice moves into new channels such as ads, audiobooks, games, or customer support.

- Time-bound Structure: Even a long-term license should state its term explicitly and define how it can be renewed.

- Revocable Design: There must be an operational plan for revocation that covers the model, published posts, takedowns, and previously permitted uses.

Recommended Read: AI Song Lyrics Generator: How to Use AI To Write Better Song Lyrics (2026 Guide)

Downloadable Framework: AI Voice Consent Template (Educational Structure)

Use this template as a rubric or as the basis for a consent form, and have it reviewed by qualified counsel. It is not a complete contract, but it outlines the clauses that most often cause misunderstandings.

- Identity & Attribution: Voice provider, stage credits, limits on celebrity-like prompting

- Training Data & Access: Audio sources, model access, security, storage, deletion rules

- Scope of Use: Channels (social, ads, streaming, games), types of use (narration, chat, vocals), permitted mods (pitch, accent, tone)

- Duration & Renewal: Start/end dates, renewal, expiry terms

- Exclusivity: Exclusive/non-exclusive, brand/political conflicts

- Compensation: Flat fee, royalty, rev share, subscription; audit-ready reporting

- Approval Rights: Pre-approval categories, prohibited uses, brand safety guardrails

- Disclosure: Platform AI labels, metadata, and sponsor disclosures

- Revocation Clause: How revocation is triggered, takedown timeline, handling of data after revocation

Built this way, a consent packet puts AI voice cloning ethics into practice by defining the relationship: no assumptions, and a scope both sides agree on.
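For teams that track consent in software as well as in signed documents, the template above can be mirrored as a simple data record. The sketch below is a minimal, hypothetical Python example: the `VoiceConsent` class, its field names, and the `permits` check are illustrative assumptions, not part of any real product or legal standard. It only checks written permission, scope, term, and revocation; it does not replace the agreement itself.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Set


@dataclass
class VoiceConsent:
    """Hypothetical consent record mirroring the template sections above."""
    voice_provider: str                # Identity & Attribution
    channels: Set[str]                 # Scope of Use: e.g. {"social", "ads"}
    use_types: Set[str]                # Scope of Use: e.g. {"narration", "vocals"}
    start: date                        # Duration & Renewal: first permitted day
    end: date                          # Duration & Renewal: last permitted day
    exclusive: bool = False            # Exclusivity
    written: bool = True               # explicit, written permission is on file
    revoked_on: Optional[date] = None  # set when the Revocation Clause is triggered

    def revoke(self, when: date) -> None:
        """Record the revocation date instead of deleting the record."""
        self.revoked_on = when

    def permits(self, channel: str, use_type: str, on: date) -> bool:
        """True only if the use is written, in scope, within the term, and not revoked."""
        if not self.written:
            return False
        if self.revoked_on is not None and on >= self.revoked_on:
            return False
        if not (self.start <= on <= self.end):
            return False
        return channel in self.channels and use_type in self.use_types


if __name__ == "__main__":
    consent = VoiceConsent(
        voice_provider="Example Voice Artist",  # hypothetical provider
        channels={"social", "streaming"},
        use_types={"narration"},
        start=date(2026, 1, 1),
        end=date(2026, 12, 31),
    )
    print(consent.permits("social", "narration", date(2026, 3, 1)))  # True
    print(consent.permits("ads", "narration", date(2026, 3, 1)))     # False: ads not in scope
    consent.revoke(date(2026, 6, 1))
    print(consent.permits("social", "narration", date(2026, 7, 1)))  # False: revoked
```

Storing revocation as a date rather than deleting the record keeps the history intact, which helps if you later need to show what was permitted when a piece of content was published.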

Commercial vs. Non-Commercial Use

When a project earns money or promotes something, risk increases. Commercial AI voice uses include advertisements, brand deals, affiliate marketing, sponsored content, product demos, and paid sponsorships, where the voice helps generate revenue. Ads and sponsored placements matter because they create endorsement and advertising expectations. The Federal Trade Commission issues guidance on endorsements and disclosure, emphasizing that disclosures should fit the context and that relationships affecting credibility should be clear. That is why AI voice monetization compliance cannot be a casual creative decision; it is part of brand compliance. Even personal use needs approval, because personal clips can turn commercial when they are reposted, boosted, or licensed. Rule of thumb: when the voice sells, persuades, or monetizes attention, treat the use as commercial and document it.

Recommended Read: How To Monetize AI-Generated Music Across Streaming Platforms

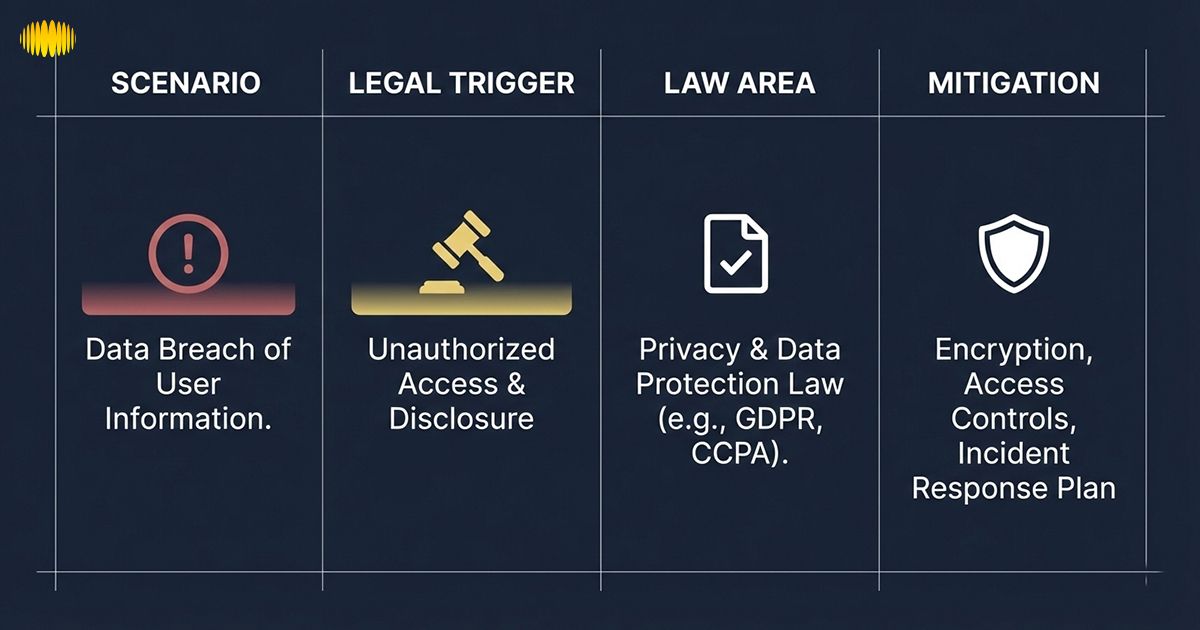

Deepfake Risk Scenarios and Legal Exposure

This section is not meant to scare anyone. It shows how conflicts develop and how to reduce friction from the start. Each scenario describes the risk trigger, the likely legal exposure, and a mitigation strategy.

1. The Use Of A Celebrity-Like Voice In An Advertisement

Risk trigger: A synthetic voice that sounds like a familiar personality appears in marketing, even though none of that person's actual audio was used.

Possible exposure: Right of publicity AI voice claims, implied endorsement, deepfake voice laws, and platform ad review problems. New York and California law both refer to voice used in a commercial context.

Mitigation strategy:

- Avoid imitation prompts

- Use licensed or non-recognizable voices

- Match the written consent to the territory, ad use, and time period

- Add synthetic voice labeling where needed

2. A Sponsored TikTok With Undisclosed AI Narration

Risk trigger: A paid promotion uses realistic AI narration without a clear sponsor disclosure or an AI label.

Possible exposure: Platform enforcement, audience deception concerns, and endorsement disclosure problems. TikTok sets labeling requirements for realistic AI-generated audio and provides tools to disclose it.

Mitigation strategy:

- Use platform synthetic labels

- Include disclosure of sponsors

- Keep consent records and scripts

3. Training A Voice Model On Scraped YouTube Clips

Risk trigger: Training on uploaded third-party material without authorization, then commercializing the cloned voice.

Possible exposure: AI voice copyright issues over copied recordings, platform terms-of-use violations, and identity claims.

Case spotlight: Lehrman & Sage v. Lovo, Inc. (S.D.N.Y.). Voice actors Paul Lehrman and Linnea Sage allege that Lovo obtained their recordings through gig websites under the pretext of research, then used them to build and market voice clones.

- The lesson for creators is license clarity. A license to record a performance does not necessarily include a license to train a model on that audio, reproduce the voice as a clone, or create a reusable cloned voice.

- Operational Fix: Your consent packet must explicitly address training, synthetic voice generation, and distribution/monetization, and it must include revocation and deletion steps plus dataset provenance logs.

Mitigation strategy:

- Keep dataset provenance files (source, license, consent)

- Use only authorized audio

- Specify scope (internal test vs product vs resale)

- Treat consent as a living document
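The provenance files and the "use only authorized audio" rule can be combined into a pre-training filter. This is a minimal sketch under stated assumptions: the `ClipProvenance` fields, the three scope levels (taken from "internal test vs product vs resale" above), and the clip names are hypothetical, and a real pipeline would load these records from your own provenance files.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical scope levels, ordered from narrowest to broadest.
SCOPE_ORDER = {"internal_test": 0, "product": 1, "resale": 2}


@dataclass(frozen=True)
class ClipProvenance:
    path: str         # audio file in the training set
    source: str       # where the audio came from
    license_id: str   # "" if no license is on file
    consent_ref: str  # "" if no signed consent is on file
    max_scope: str    # broadest use the license and consent allow


def authorized_clips(clips: List[ClipProvenance], intended_scope: str) -> List[ClipProvenance]:
    """Keep only clips with a license, a consent record, and scope wide enough for the intended use."""
    needed = SCOPE_ORDER[intended_scope]
    return [
        c for c in clips
        if c.license_id and c.consent_ref and SCOPE_ORDER[c.max_scope] >= needed
    ]


if __name__ == "__main__":
    clips = [
        ClipProvenance("a.wav", "studio session", "LIC-001", "CONSENT-001", "resale"),
        ClipProvenance("b.wav", "scraped video", "", "", "internal_test"),
        ClipProvenance("c.wav", "studio session", "LIC-002", "CONSENT-002", "internal_test"),
    ]
    print([c.path for c in authorized_clips(clips, "product")])  # ['a.wav']
```

Filtering before training, rather than after, means a clip with missing paperwork never reaches the model, which is simpler than trying to remove its influence later.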

4. Fraud Scam Using A Cloned Voice

Risk trigger: A fraudster poses as a relative, colleague, or official.

Possible exposure: Criminal fraud, consumer harm, platform reports, and regulatory action. The New Hampshire robocall case and the resulting FCC settlement show that synthetic voice abuse is investigated at a high level.

Mitigation strategy:

- Use provenance signals or watermarking

- Build a takedown playbook

- Train teams on verification steps (call-back codes)

- Control and log model access

These scenarios connect AI voice legal risks to everyday practice. The same message repeats: consent, disclosure, and documentation prevent most of the chaos.

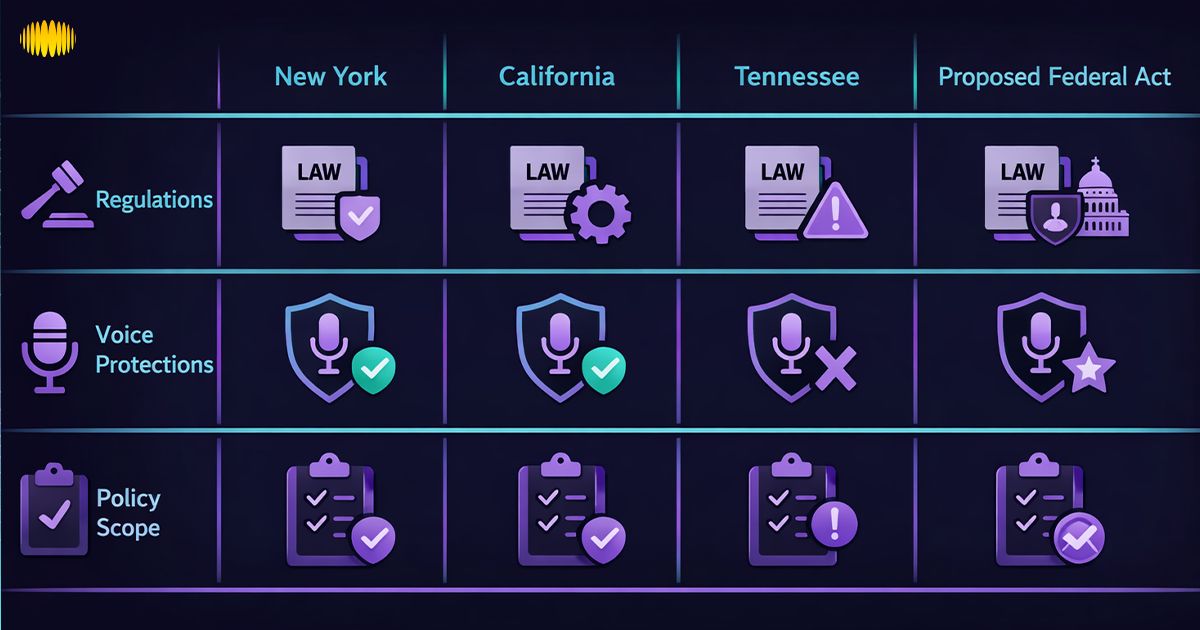

Emerging Laws and Industry Response

Regulation is changing fast, and creators need a simpler way to track the highlights without reading every legal text.

- Tennessee ELVIS Act (2024): Expands state publicity protections to better cover modern “voice/likeness” misuse tied to generative AI, strengthening claims around unauthorized imitation and commercial exploitation.

- NO FAKES Act: This proposed federal bill would create a national “digital replica” right for voice and visual likeness, paired with a notice-and-takedown process and platform safe-harbor mechanics (removing or disabling access when properly notified, with defined service-provider concepts). For creators, it signals a shift toward standardized consent and takedown workflows.

- State-by-State Expansion: More states are modernizing publicity statutes (especially around post-mortem rights), which matters if your content features deceased performers or “sound-alike” marketing.

- Union/Industry Guardrails: SAG-AFTRA agreements increasingly push for informed consent, compensation, and limits when a “digital replica” is created or when performances are altered using AI; contract terms are becoming as important as statutes for real-world compliance.

Artists do not have to memorize every update. Instead, build a compliance habit: watch for platform policy changes and keep your consent language aligned with how the voice is actually used.

Recommended Read: Prompt Engineering for AI Music: Genre-Specific Tips & Templates

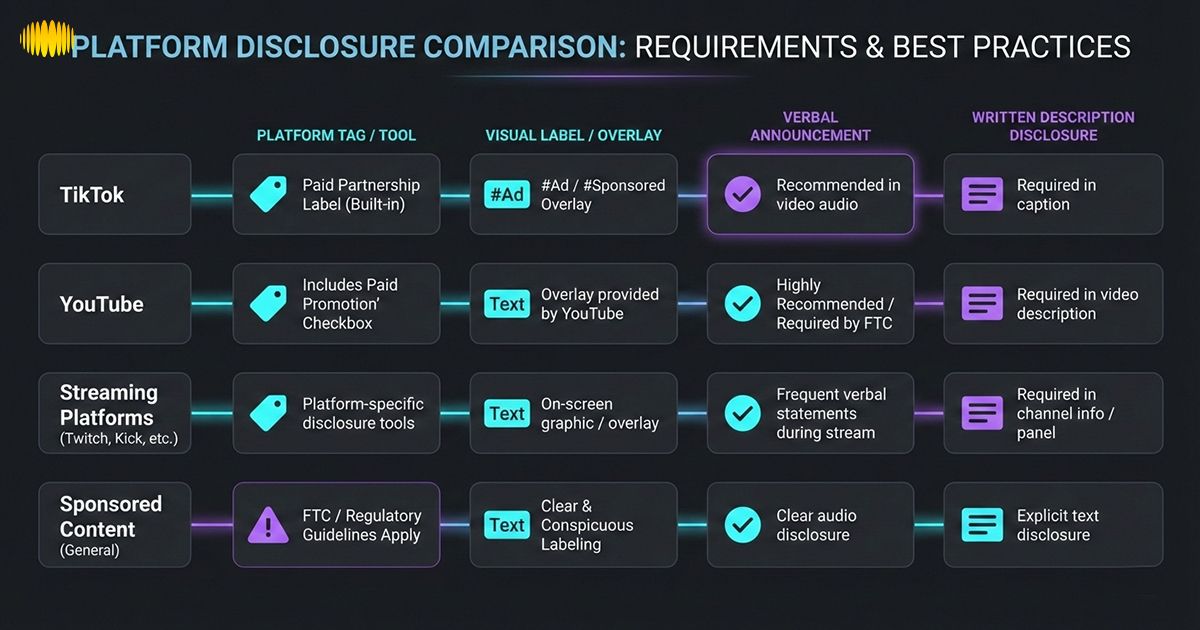

Platform Compliance and Disclosure Requirements

Platform policies shape outcomes: takedowns, demonetization, reduced reach, or account friction during review. That makes AI voice disclosure requirements part of production rather than a last-minute addition. TikTok requires labels on realistic AI-generated content to give viewers context. YouTube requires creators to disclose realistic altered or synthetic content in the interest of transparency. Spotify allows vocal impersonation only when the impersonated artist has authorized it, and it is moving toward more explicit AI disclosures in metadata.

Platform Disclosure Comparison Table

| Channel | Disclosure Likely Expected | Where It Appears | Common Trigger | Practical Creator Fix |
|---|---|---|---|---|
| TikTok | Synthetic Voice Labeling On Realistic AI Audio | Platform Label, Sticker, Caption | Realistic Synthetic Media That Could Mislead Viewers | Use The AI Labeling Tools And Add A Plain-Language Caption Note |
| YouTube | Disclosure Of Realistic Altered Or Synthetic Content | “How This Content Was Made” Label | Lifelike AI Audio Or Images Viewers Could Mistake For Real | Enable The Disclosure At Upload And Archive Project Notes |
| Streaming Platforms | Impersonation Authorization + Metadata Signals | Distributor Metadata And Reporting Tools | Cloning The Voice Of A Real Artist | Prepare Licenses, Include Metadata Disclosures, And Never Impersonate |
| Sponsored Content Rules | Clear Disclosure Of Sponsors Who Provide Money Or Perks | On-Screen Text, Description, Caption | Sponsored Promotion That Borrows Credibility | Follow FTC Disclosure Guidance; Make It Visible And Understandable |


This is where creator economy compliance becomes real. An artist can do the ethically right thing and still be flagged if disclosure cues are missing.

Brand Protection and Risk Management

Brands and creators should protect voices the way they protect visuals: clear rights, limited reuse, and fast detection of misuse.

- Contract Clauses Built For AI: Traditional voice contracts define use narrowly, while AI expands the possibilities for reuse. Include language covering AI training, derivative voices, edits, and re-licensing.

- Watermarking and Provenance: Watermarking (audible or inaudible) is not magic protection; it provides evidence and traceability. Pair it with a version log that records the model version, prompt, script, date, and intended channel.

- Monitoring and Reporting: Scan monthly for unauthorized uploads or ads that use your voice. Keep platform reporting links and template emails ready.

- Brand Protection Playbook: Treat your voice as your brand. Consistent naming, credits, and publishing rights help defend against brand theft.

- Media Liability Insurance: Some creators and agencies carry media liability insurance that may cover certain claims and defense costs, depending on the policy and circumstances. It is not a cure-all, but with an experienced broker it can support a broader risk plan.
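A version log like the one described under Watermarking and Provenance does not need special tooling. As a minimal sketch, assuming a JSON Lines file (one JSON record per line) and hypothetical field names and function names (`log_generation`, `records_for_channel`), each generated clip is appended with its model version, prompt, script, timestamp, intended channel, and an optional watermark ID. This is an illustration only, not the API of any particular watermarking product.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import List, Optional


def log_generation(log_path: Path, model_version: str, prompt: str,
                   script_id: str, channel: str,
                   watermark_id: Optional[str] = None) -> dict:
    """Append one generation record to a JSON Lines log and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt": prompt,
        "script_id": script_id,
        "channel": channel,
        "watermark_id": watermark_id,  # hypothetical ID from whatever watermarking you use
    }
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def records_for_channel(log_path: Path, channel: str) -> List[dict]:
    """Read the log back and return every record for one distribution channel."""
    with open(log_path, encoding="utf-8") as f:
        records = [json.loads(line) for line in f if line.strip()]
    return [r for r in records if r["channel"] == channel]


if __name__ == "__main__":
    # Demo writes to a throwaway directory so no real log is touched.
    log = Path(tempfile.mkdtemp()) / "voice_log.jsonl"
    log_generation(log, "voice-model-v3", "warm, calm read", "script-042", "youtube", "wm-7f3a")
    log_generation(log, "voice-model-v3", "upbeat read", "script-043", "tiktok")
    print(len(records_for_channel(log, "youtube")))  # 1
```

An append-only text file is easy to back up alongside the project folder, and reading it per channel makes it quick to answer a platform's question about a specific upload.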

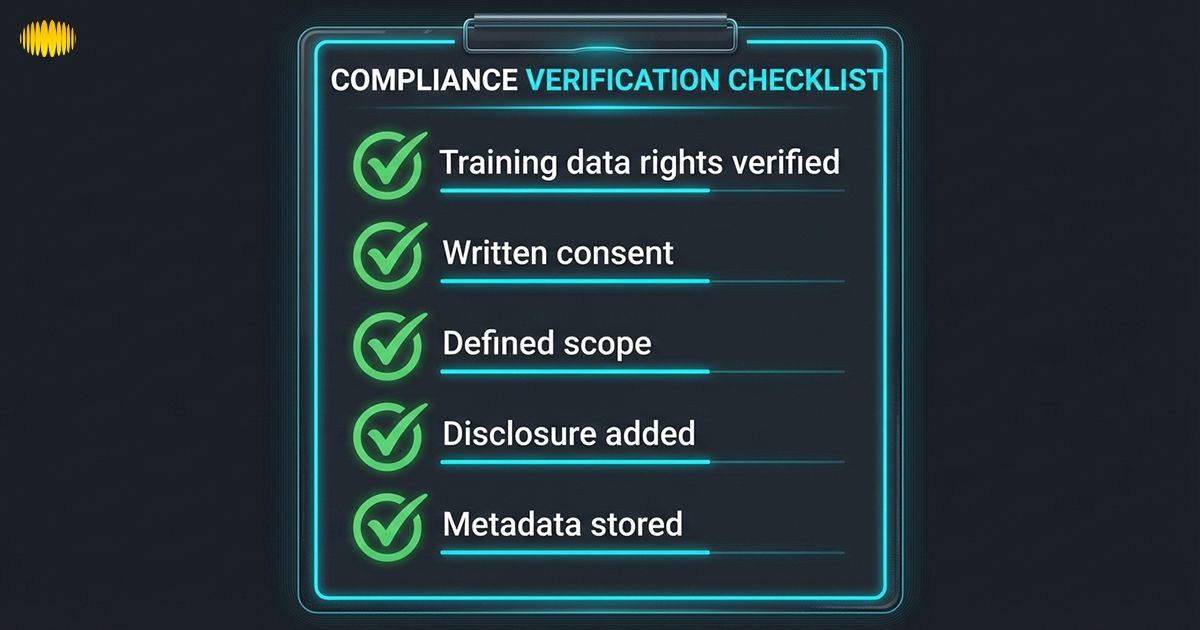

Compliance Checklist And Documentation Toolkit

This section is designed to fit into your workflow and serve as your “audit folder” when a platform, partner, or voice artist has questions.

Compliance Checklist

- Verify rights, licenses, and consent for all training data

- Draft or review your AI voice consent template

- Define scope by channel and content type

- Include a revocation clause with a takedown timeframe

- Set compensation, reporting, and approval terms

- Apply platform labels and meet AI voice disclosure requirements

- Store metadata: model version, prompts, scripts, dates, and distribution list

- Monitor for abuse; record reports and actions taken

- Keep all records in one project folder
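To make the "one project folder" item checkable, a short script can flag which records are missing before a platform or partner asks. The file names below are hypothetical placeholders for the checklist items, and `missing_items` is an illustrative helper; adapt both to however your folder is actually organized.

```python
import tempfile
from pathlib import Path
from typing import List

# Hypothetical file names, one per checklist item; rename to match your own folder.
REQUIRED_ITEMS = {
    "training_rights.csv": "Training data rights, licenses, and consent",
    "consent_signed.pdf": "Signed AI voice consent (scope, revocation, compensation)",
    "disclosures.md": "Platform labels and sponsor disclosures used",
    "generation_log.jsonl": "Model versions, prompts, scripts, dates, distribution list",
    "abuse_reports.md": "Abuse monitoring reports and actions taken",
}


def missing_items(project_dir: str) -> List[str]:
    """Return the checklist descriptions whose file is absent from the project folder."""
    folder = Path(project_dir)
    return [desc for name, desc in REQUIRED_ITEMS.items() if not (folder / name).is_file()]


if __name__ == "__main__":
    # Demo: a temporary folder that only contains the signed consent.
    with tempfile.TemporaryDirectory() as tmp:
        (Path(tmp) / "consent_signed.pdf").write_text("placeholder")
        for item in missing_items(tmp):
            print("Missing:", item)
```

A file-presence check only confirms that each record exists; whether its contents are correct still needs human (and, where appropriate, legal) review.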

Sample Clause Excerpt (Educational Example, Not Legal Advice)

“Permission applies only to the listed Uses for the stated Duration. It may not be transferred or sublicensed without written terms. Upon revocation under the Revocation section, the Licensee will cease further publication within the agreed period and follow the documented procedure to delete and/or disable access to the model and associated assets.”

This provision does not attempt to resolve conflicts. It aims to prevent them.

Recommended Read: AI Songwriting: How to Write Songs Using AI (2026 Guide)

Monetization and Creator Economy Implications

Money changes the rules of attention. Platforms screen commercial content more rigorously, audiences expect clearer endorsement disclosures, and partners demand more.

An AI voice monetization compliance strategy involves:

- Royalties: Track usage; pay per stream, spot, or project

- Buyouts: One-time payment with a very narrow scope and a short term

- Subscription Voice Packs: Licensed like software, with clearly defined boundaries

- Sync Licensing: Set terms by brand category, territory, and campaign value

Social monetization and streaming revenue can fail when disclosure or authorization is missing. Compliance safeguards revenue by reducing takedowns, demonetization, and partner hesitation.

How Sonygram Supports Ethical AI Voice Cloning and Consent Management

Ethical voice cloning requires systems that respect consent boundaries, document usage, and minimize legal ambiguity before content goes live. Creators use Sonygram.ai to turn ethical voice cloning into a repeatable process built around consent, scope, and traceability.

Key ways include:

- Document voice authorization before cloning

- Determine channel-based usage (ads, social, streaming)

- Control access to prevent unauthorized reuse

- Maintain disclosure, audit, and royalty logs

- Minimize takedowns, affiliation friction, and repurposing disputes

Frequently Asked Questions (FAQs)

1. Can I Clone A Celebrity Voice?

Risk is highest when the voice is linked to a recognizable person and the content sells something or implies endorsement. Both AI voice cloning ethics and AI voice legal risk point toward licensed voices with clear authorization, rather than imitation.

2. What Protects My Voice Legally?

Depending on the jurisdiction, protection can come from voice likeness rights and state publicity laws, as well as contract rights and platform rules. California and New York statutes explicitly mention voice in commercial contexts.

3. Is Personal Use Safe?

Personal use can still cause harm if the output is marketed, sold, or presented as real. The lower-risk approach relies on informed consent and explicit limits on posting and reuse.

4. What Is the NO FAKES Act?

The NO FAKES Act is a proposed U.S. federal law that would create a right over digital replicas of an individual's voice or likeness, backed by a notice-and-takedown process.

5. Do I Need Written Consent?

Written AI voice cloning consent is the robust practice, especially for commercial use. New York's statute refers to written permission for using a living person's voice in advertising or trade.

6. How Do I Disclose AI Voices?

Use the platform's AI-content label and add a clear, plain-language note (e.g., “This voice is AI-generated”). Also disclose any sponsorship or material connection.

7. What If the Person Is Deceased?

Post-mortem rights vary by state, but you will usually need authorization from the estate (e.g., under California Civil Code §3344.1).

Conclusion

Ethical voice cloning is not anti-innovation; it is about trust. The strongest AI voice cloning consent is informed, explicit, scoped, time-bound, and operationally revocable. Commercial projects raise the bar: disclosure, documentation, and unambiguous licensing should be part of every commercial AI voice use. Transparency protects revenue, because platforms and partners reward transparency and penalize misleading content. Sonygram supports ethical AI voice cloning with tools and guidance built on the idea that consent is infrastructure, not marketing copy.

Next steps: download the Consent and Compliance Checklist, explore ethical voice tools such as Sonygram, and subscribe for consent-first compliance updates.